Difference between revisions of "Main Page"

(→New here?) |

| Line 2: | Line 2: | ||

<center> | <center> | ||

<font size=5px>''Welcome to the '''explain [[xkcd]]''' wiki!''</font> | <font size=5px>''Welcome to the '''explain [[xkcd]]''' wiki!''</font> | ||

| − | + | Today, the wiki is in read-only mode to allow for a hosting migration. Please enjoy reading all our xkcd explanations. | |

We have an explanation for all [[:Category:Comics|'''{{#expr:{{PAGESINCAT:Comics|R}}-13}}''' xkcd comics]], | We have an explanation for all [[:Category:Comics|'''{{#expr:{{PAGESINCAT:Comics|R}}-13}}''' xkcd comics]], | ||

<!-- Note: the -13 in the calculation above is to discount subcategories (there are 8 of them as of 2013-02-27), | <!-- Note: the -13 in the calculation above is to discount subcategories (there are 8 of them as of 2013-02-27), | ||

Revision as of 13:12, 29 October 2013

Welcome to the explain xkcd wiki! Today, the wiki is in read-only mode to allow for a hosting migration. Please enjoy reading all our xkcd explanations. We have an explanation for all 2 xkcd comics, and only 0 (0%) are incomplete. Help us finish them!

Latest comic

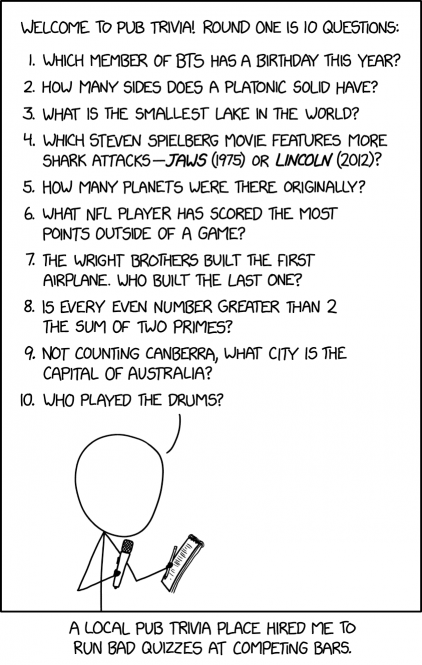

| Pub Trivia |

Title text: Bonus question: Where is London located? (a) The British Isles (b) Great Britain and Northern Ireland (c) The UK (d) Europe (or 'the EU') (e) Greater London |

Explanation

This explanation may be incomplete or incorrect: Created by TRIVIA IS LATIN FOR THREE ROADS - Please change this comment when editing this page. Do NOT delete this tag too soon.

Many pubs have trivia nights, where patrons form teams and compete to answer questions about a range of topics. The typical goal for trivia games is that they be challenging, yet possible, so questions that are too difficult or too easy generally make for a poor game. In addition, it's usually preferable that questions be clearly worded with a single, objective answer, so as to avoid disputes about which answers are correct.

Cueball has apparently been hired by one bar to infiltrate other bars' quiz nights and ask particularly bad questions. The implication is that this will make the games unpleasant, in the hopes that people will leave, and possibly go to the bar that hired Cueball.

Cueball uses a variety of strategies to write bad questions, including questions that are trivial (where the answer is painfully obvious), unanswerable (either because there is no answer, or because the answer is unknown), ambiguously worded, or arguable.

Many of his questions could be altered slightly to make them more reasonable for such a game, but that would defeat Cueball's purpose.

| Question | Problem with the Question | Explanation | More Reasonable Alternative |

|---|---|---|---|

| Which member of BTS has a birthday this year? | Multiple correct answers | All people have birthdays every year (other than pedantic exceptions due to calendar issues or someone dying before their birthday, none of which apply in this case). Therefore, all members of BTS have birthdays this year. | Which member of BTS has a birthday today/this week/this month? Which member of BTS turns a certain age this year? |

| How many sides does a platonic solid have? | Multiple answers, ambiguous language | There are five Platonic solids, with 4, 6, 8, 12, or 20 faces (colloquially called sides) in Euclidean 3-space. The solids have, respectively, 6, 12, 12, 30, and 30 edges (also occasionally called sides colloquially). A more devious quizmaster might actually include this as a trick question with the correct answer being 'zero', since strictly speaking solids do not have 'sides', but that doesn't appear to be the case here. | How many Platonic solids are there? What is the highest number of faces on a Platonic solid? |

| What is the smallest lake in the world? | Arguable | While the largest lakes are relatively straightforward to categorize, smaller bodies of water range in size down to individual puddles. There is no clear, definitional line at which a body goes from being a lake to a pond, for example. In addition, the size of small lakes fluctuates with precipitation and other weather effects, and some lakes only exist for brief periods (intermittent lakes). Hence, which small bodies of water are "lakes", and which of them is the smallest, can't be clearly answered without specifying a whole list of parameters and standards. | What lake has the largest surface area in the world? What is the world's deepest lake? What lake is recognized by the Guinness World Records as the world's smallest? (Benxi Lake in China). |

| Which Steven Spielberg movie features more shark attacks, Jaws (1975) or Lincoln (2012)? | Trivial | Jaws is a famous movie about a killer shark, and features at least five fatal shark attacks. Lincoln is a movie about the passage of the Thirteenth Amendment to the U.S. Constitution, containing zero shark attacks[citation needed]. Anyone with even a passing familiarity with American popular culture should be able to get this one right, and someone with no knowledge could likely guess the answer from the titles alone. | How many fatal shark attacks occur in "Jaws"? How many times is the shark seen on screen? Which film won more Academy Awards? |

| How many planets were there originally? | Ambiguous | The question doesn't specify a time frame or culture, and also doesn't specify that it's referring to our solar system (in the observable universe, there are almost certainly trillions of planets). Additionally, it asks how many "were there", as opposed to how many planets were known (the number of planets hasn't changed in human history, only the number which are known and defined as such). | How many planets were known to Ancient Greece? How many planets were known to science prior to the invention of the telescope? |

| What NFL player has scored the most points outside of a game? | Ambiguous, Unknowable | The term "scored the most points" generally only applies within the context of a game, making it very unclear what kind of "points" the question is referring to. Does it mean points in non-NFL games? Points in games other than football? Points outside the context of any game at all (such as 'making a point' in conversation)? Even if this were clarified, the question is likely impossible to answer: points scored in official games in professional sports leagues are meticulously recorded and published, but points scored in any other context are not. | Which NFL player scored the most points in a game/season/career? |

| The Wright brothers built the first airplane. Who built the last one? | Unknowable | Orville and Wilbur Wright are widely credited with designing and building the first airplane (in the sense of a heavier-than-air flying machine that could take off, steer and land under its own power). In modern times, design and construction of airplanes has become a huge, international industry, with many airplanes of widely varying sizes being built each year. Since airplanes are built continuously, which one was made most recently depends on when the question is asked (and would be very difficult for the average person to know). If it's asking about the last airplane ever, that's impossible to know, since that plane hasn't been built yet (and hopefully won't be for a very long time). Also, the question seems to be asking for a name, but modern airplanes are generally designed and built by companies, without a single person (or even a small number of people) being responsible. | |

| Is every even number greater than 2 the sum of two primes? | Unknown, Possibly unknowable | This is an open question in mathematics, known as Goldbach's conjecture. Mathematicians widely believe that it is true, and it has held for every number checked so far (and a great many have been checked), but since it's impossible to check every number individually, checking alone can never settle the question. No proof of the conjecture exists at this point. Since it is known that some statements can be true yet impossible to prove within a given system of axioms, this may be the situation forever. | |

| Not counting Canberra, what city is the capital of Australia? | No answer exists | Australia has only one capital (unlike some countries, which divide the legislative and administrative capitals, for example), and that capital is Canberra. Hence, by definition, there is no capital "not counting Canberra". | What is the capital of Australia? |

| Who played the drums? | Trivial | As worded, the question could be answered with anyone who's ever played the drums, in any context, whether professional or not, in all of history. This would include a huge number of people, most of whom would not be well-known. Most people would be able to offer a technically correct answer, and almost none of them would be interesting. | Who played the drums for some specific band/album/track? |

| (Alt-Text) Where is London located? (a) The British Isles (b) Great Britain and Northern Ireland (c) The UK (d) Europe (or 'the EU') (e) Greater London | Multiple answers | All choices are technically correct as they are various geographical areas that include the city of London, England. The second to last choice, however, is both correct and incorrect, as it conflates Europe and the EU. The United Kingdom (and therefore London) left the European Union in 2020, but is still generally categorized as being part of Europe geographically. | |
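
The Goldbach question in the table is easy to test for small numbers, even though no amount of testing can prove it. The following is a minimal Python sketch of such a brute-force check; the function names (`is_prime`, `goldbach_pair`, `first_counterexample`) are made up for this illustration and do not come from the comic or from any library.

```python
def is_prime(n: int) -> bool:
    """Trial division; slow, but fine for the small numbers used here."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def goldbach_pair(n: int):
    """Return one pair of primes (p, q) with p + q == n and p <= q, or None."""
    for p in range(2, n // 2 + 1):
        if is_prime(p) and is_prime(n - p):
            return (p, n - p)
    return None


def first_counterexample(limit: int):
    """Return the first even number in 4..limit with no such pair, or None."""
    for n in range(4, limit + 1, 2):
        if goldbach_pair(n) is None:
            return n
    return None


if __name__ == "__main__":
    print(goldbach_pair(28))           # one way to write 28 as a sum of two primes
    print(first_counterexample(1000))  # None means no counterexample up to 1000
```

A result of `None` from `first_counterexample` only says that no counterexample exists up to the chosen limit, which is exactly why the question remains unanswerable at a pub quiz.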

Transcript

This transcript is incomplete. Please help editing it! Thanks.

- [Cueball holding a microphone and reading from a sheet of paper]:

- Welcome to pub trivia! Round one is 10 questions:

- Which member of BTS has a birthday this year?

- How many sides does a platonic solid have?

- What is the smallest lake in the world?

- Which Steven Spielberg movie features more shark attacks - Jaws (1975) or Lincoln (2012)?

- How many planets were there originally?

- What NFL player has scored the most points outside of a game?

- The Wright brothers built the first airplane. Who built the last one?

- Is every even number greater than 2 the sum of two primes?

- Not counting Canberra, what city is the capital of Australia?

- Who played the drums?

- [Caption below the panel]:

- A local pub trivia place hired me to run bad quizzes at competing bars.

New here?

You can read a brief introduction about this wiki at explain xkcd. Feel free to sign up for an account and contribute to the wiki! We need explanations for comics, characters, themes, memes and everything in between. If it is referenced in an xkcd web comic, it should be here.

- If you're new to wikis like this, take a look at these help pages describing how to navigate the wiki, and how to edit pages.

- Discussion about various parts of the wiki is going on at Explain XKCD:Community portal. Share your 2¢!

- List of all comics contains a table of most recent xkcd comics and links to the rest, and the corresponding explanations. There are incomplete explanations listed here. Feel free to help out by expanding them!

- If you see that a new comic hasn't been explained yet, you can create it: Here's how.

- We sell advertising space to pay for our server costs. To learn more, go here.

Rules

Don't be a jerk. There are a lot of comics that don't have set-in-stone explanations; feel free to put multiple interpretations in the wiki page for each comic.

If you want to talk about a specific comic, use its discussion page.

Please only submit material directly related to —and helping everyone better understand— xkcd... and of course only submit material that can legally be posted (and freely edited). Off-topic or other inappropriate content is subject to removal or modification at admin discretion, and users who repeatedly post such content will be blocked.

If you need assistance from an admin, post a message to the Admin requests board.