Difference between revisions of "2295: Garbage Math"


Revision as of 17:55, 17 April 2020

Garbage Math

Title text: 'Garbage In, Garbage Out' should not be taken to imply any sort of conservation law limiting the amount of garbage produced.

Explanation


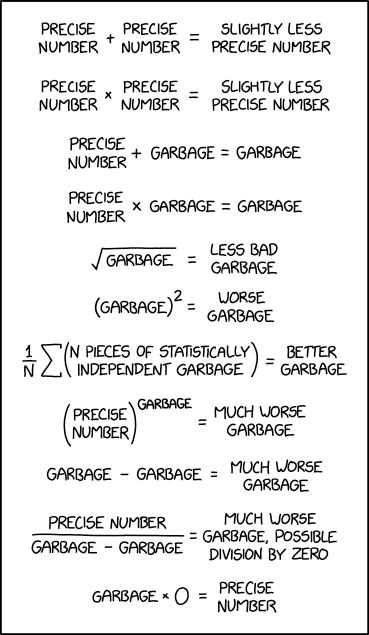

This comic explains the "garbage in, garbage out" concept using arithmetical expressions. As the comic shows, once garbage enters any step of a calculation, the result is usually garbage as well.

Some of these rules correspond to the rules of floating point arithmetic (https://en.wikipedia.org/wiki/Floating-point_arithmetic), while others may be inspired by the rules of propagation of uncertainty (https://en.wikipedia.org/wiki/Propagation_of_uncertainty#Example_formulae) where a "garbage" number would correspond to an estimate with a high degree of uncertainty, and the uncertainty of the result of arithmetic operations will tend to be dominated by the term with the highest uncertainty. The rule about N pieces of independent garbage reflects the central limit theorem (https://en.wikipedia.org/wiki/Central_limit_theorem) and how it predicts that the uncertainty (or standard error https://en.wikipedia.org/wiki/Standard_error) of an estimate will be reduced when independent estimates are averaged.
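The effect of averaging independent estimates can be illustrated with a small simulation. This is only a sketch: it assumes every estimate carries zero-mean Gaussian noise with the same standard deviation `sigma`, and the function names `standard_error` and `simulated_spread_of_mean` are made up for this example.

```python
import math
import random
import statistics


def standard_error(sigma: float, n: int) -> float:
    """Predicted standard error of the mean of n independent estimates,
    each with standard deviation sigma (assumed equal for all estimates)."""
    return sigma / math.sqrt(n)


def simulated_spread_of_mean(sigma: float, n: int, trials: int = 2000, seed: int = 0) -> float:
    """Empirical standard deviation of the mean of n noisy estimates.

    Each estimate is drawn as Gaussian noise around 0; this noise model is
    an assumption of the sketch, not something stated in the comic.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    means = []
    for _ in range(trials):
        sample = [rng.gauss(0.0, sigma) for _ in range(n)]
        means.append(statistics.fmean(sample))
    return statistics.stdev(means)


if __name__ == "__main__":
    # Compare the predicted and the simulated spread for growing N.
    for n in (1, 4, 16, 64):
        print(n, round(standard_error(1.0, n), 3), round(simulated_spread_of_mean(1.0, n), 3))
```

Under these assumptions the spread of the average shrinks roughly as 1/√N, so quadrupling the number of independent pieces of garbage only halves the uncertainty.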

This comic is probably not COVID-19 related (though arguably it could be related to doing statistical analyses with the varying quality of data related to the disease), meaning that the 19 comic streak preceding this on topics relating to COVID-19 is probably broken.

This comic is about the propagation of errors in numerical analysis and statistics, described in much more colloquial terms. Numbers with low precision are termed "garbage" and numbers with high precision are termed "precise numbers".
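The table that follows relies on the difference between absolute and relative error. As a rough reminder, and using symbols chosen only for this sketch (x and y are the values, δx and δy the half-widths of their error bars), the usual first-order, worst-case rules can be written as:

```latex
% x, y: values; \delta x, \delta y >= 0: half-widths of their error bars
% (notation assumed for this sketch, not taken from the comic)
\[
\text{absolute error of } x = \delta x, \qquad
\text{relative error of } x = \frac{\delta x}{|x|} \quad (x \neq 0)
\]
% Worst case for sums and differences: absolute errors add.
\[
\delta(x \pm y) \le \delta x + \delta y
\]
% First order for products: relative errors add.
\[
\frac{\delta(xy)}{|xy|} \approx \frac{\delta x}{|x|} + \frac{\delta y}{|y|}
\]
```

These are worst-case bounds. For statistically independent errors, the standard deviations would instead combine in quadrature, which gives smaller values.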

| Formula | Explanation |
|---|---|
| Precise number + Precise number = Slightly less precise number | If we know absolute error bars, then adding two precise numbers will at worst add the sizes of the two error bars. For example, if our precise numbers are 1 (±10<sup>-6</sup>) and 1 (±10<sup>-6</sup>), then our sum is 2 (±2·10<sup>-6</sup>). It is possible to lose a lot of relative precision if the sum is close to zero, which happens when a number is added to one close to its additive inverse. This phenomenon is known as catastrophic cancellation. It is therefore likely that all numbers referred to here are positive, since sums of positive numbers do not exhibit this phenomenon. |
| Precise number × Precise number = Slightly less precise number | Here, instead of absolute error, relative error will be added. For example, if our precise numbers are 1 (±10<sup>-6</sup>) and 1 (±10<sup>-6</sup>), then our product is 1 (approximately ±2·10<sup>-6</sup>). |
| Precise number + Garbage = Garbage | If one of the numbers has a high absolute error, and the numbers being added are of comparable size, then this error will be propagated to the sum. |
| Precise number × Garbage = Garbage | Likewise, if one of the numbers has a high relative error, then this error will be propagated to the product. Here, this is independent of the sizes of the numbers. |
| $\sqrt{\text{Garbage}} = \text{Less bad garbage}$ | When the square root of a number is taken, its relative error is roughly halved. |
| Garbage<sup>2</sup> = Worse garbage | Likewise, when a number is squared, its relative error is roughly doubled. This is a corollary of multiplication adding relative errors. |
| $\frac{1}{N}\sum(\text{N pieces of statistically independent garbage}) = \text{Better garbage}$ | By aggregating many statistically independent observations (for instance, surveying many individuals), it is possible to reduce relative error. This is the basis of statistical sampling. |
| Precise number<sup>Garbage</sup> = Much worse garbage | The result is very sensitive to changes in the exponent, and the magnitude of the precise number may magnify the effect further. |
| Garbage - Garbage = Much worse garbage | This line involves catastrophic cancellation. If both pieces of garbage are about the same (e.g. if their error bars overlap), then it is possible that the answer is positive, zero, or negative. |
| $\frac{\text{Precise number}}{\text{Garbage}-\text{Garbage}}$ = Much worse garbage, possible division by zero | Indeed, as above, if the error bars overlap then we might end up dividing by zero. |
| Garbage × 0 = Precise number | Multiplying anything by 0 results in 0, an extremely precise number in the sense that it has no error whatsoever since we supply the 0 ourselves. This is equivalent to discarding garbage data from a statistical analysis. |
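Several rows of the table can be checked with a few lines of code. The sketch below uses first-order, worst-case error propagation. The class `Approx` and the function `pow_precise_base` are hypothetical helpers written for this illustration; they are not part of any library and not something the comic specifies.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Approx:
    """A value with a worst-case error bar (hypothetical helper for this sketch)."""
    value: float
    abs_err: float  # half-width of the error bar, assumed >= 0

    @property
    def rel_err(self) -> float:
        # Relative error is undefined at zero; report 0 for an exact zero, else infinity.
        if self.value == 0:
            return 0.0 if self.abs_err == 0 else math.inf
        return self.abs_err / abs(self.value)

    def __add__(self, other: "Approx") -> "Approx":
        # Worst case: absolute errors add.
        return Approx(self.value + other.value, self.abs_err + other.abs_err)

    def __sub__(self, other: "Approx") -> "Approx":
        # Absolute errors still add, even though the values may cancel.
        return Approx(self.value - other.value, self.abs_err + other.abs_err)

    def __mul__(self, other: "Approx") -> "Approx":
        # First order: |a|*db + |b|*da, i.e. relative errors add.
        abs_err = abs(self.value) * other.abs_err + abs(other.value) * self.abs_err
        return Approx(self.value * other.value, abs_err)

    def sqrt(self) -> "Approx":
        # First order, for value > 0: the relative error is halved.
        root = math.sqrt(self.value)
        return Approx(root, self.abs_err / (2 * root))


def pow_precise_base(base: float, exponent: Approx) -> Approx:
    """base ** exponent for an exact base > 0 and an uncertain exponent (first order)."""
    value = base ** exponent.value
    return Approx(value, abs(math.log(base)) * value * exponent.abs_err)


if __name__ == "__main__":
    precise = Approx(1.0, 1e-6)
    garbage = Approx(100.0, 20.0)                 # 20% relative error
    print(precise + precise)                      # error bars add
    print((garbage * garbage).rel_err)            # squaring: about twice the relative error
    print(garbage.sqrt().rel_err)                 # square root: about half the relative error
    print(Approx(10.0, 1.0) - Approx(9.5, 1.0))   # cancellation: error bar exceeds the value
    print(pow_precise_base(10.0, Approx(3.0, 0.3)).rel_err)
    print(Approx(10.0, 1.0) * Approx(0.0, 0.0))   # exact zero wipes out the error
```

Because the rules are first-order, they are only trustworthy for small error bars. For real garbage, in particular in the exponent case, they understate how bad the result can get.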

The title text refers to the computer science maxim "garbage in, garbage out", which states that even if some code accurately does what it is supposed to do, supplying incorrect data will produce incorrect results. As shown above, however, plugging data into mathematical formulas can magnify the error of the input data, though there are ways to reduce this error (such as aggregating data). Therefore, the quantity of garbage is not necessarily conserved.

Transcript

[A series of mathematical equations are written from top to bottom]

PRECISE NUMBER + PRECISE NUMBER = SLIGHTLY LESS PRECISE NUMBER

PRECISE NUMBER x PRECISE NUMBER = SLIGHTLY LESS PRECISE NUMBER

PRECISE NUMBER + GARBAGE = GARBAGE

PRECISE NUMBER x GARBAGE = GARBAGE

GARBAGE [inside square root symbol] = LESS BAD GARBAGE

(GARBAGE)2 [superscript '2' as exponentiation] = WORSE GARBAGE

1/N [Greek letter Sigma] (N PIECES OF STATISTICALLY INDEPENDENT GARBAGE) = BETTER GARBAGE

(PRECISE NUMBER)GARBAGE [superscript 'GARBAGE' as exponentiation] = MUCH WORSE GARBAGE

GARBAGE - GARBAGE = MUCH WORSE GARBAGE

PRECISE NUMBER / (GARBAGE - GARBAGE) [written as a fraction] = MUCH WORSE GARBAGE, POSSIBLE DIVISION BY ZERO

GARBAGE x 0 = PRECISE NUMBER

Discussion

Inclusion in Series

This is not a Covid19 comic. One could think that this is a comment on the difficulties of modeling the corona virus outbreak, but since discussions of exponential functions are only a small part in the comic I believe it is just a general comment on floating point arithmetic mixed in with statistical considerations. --108.162.229.242 17:28, 17 April 2020 (UTC)

--141.101.69.153 21:53, 19 April 2020 (UTC)

Math and Error bars

Well this is surprising came here thinking I understood it just to see what the discussion looked like. Ended up learning something new. I was able to understand intuitively the comic. But this is my first exposure to actually doing math on the error bars. I think I was supposed to do that in college but I don't remember anyone ever explaining how it should work. --162.158.63.208 18:14, 17 April 2020 (UTC)

In recent days, there have been a number of math "quizzes" in this same type of format, albeit generally with only addition and maybe multiplication, appearing on Facebook. Should the explanation include a reference to this as a possible contributing reason for Randall's comic? One could also argue that those quizzes have been appearing on Facebook as a way to spend/waste time during the coronavirus pandemic lock-down, making the comic at least tangentially related to Covid19 LIES.

What's the difference between relative error and absolute error? I don't understand these terms. Maybe add?

Are all of these equations consistent with garbage = infinity?

Would the summation divided by n just give you the arithmetic mean of the data set? Nutster (talk) 01:55, 18 April 2020 (UTC)

The statement that NaN^0 isn't fully justified and I'm not clear it belongs. Djbrasier (talk) 18:46, 18 April 2020 (UTC)

I'm concerned that, with "Precise Number" there's the usual confusion between Accuracy and Precision (edit: and of course Resolution, too!). A precise number can still be utter garbage, as 84.7489327(646475)% of all mathematicians could tell you. 162.158.111.241 13:59, 19 April 2020 (UTC)

Could someone please double check that the given uncertainty formula for "Precise number / ( Garbage – Garbage )" at the second to the bottom is correct? I'm not sure it properly accommodates the uncertainty of the numerator. 162.158.255.64 07:48, 20 April 2020 (UTC)

Are the changes from "=" to "≈" correct? Either way, isn't the proper symbol for the relation "≅" ("approximately equal to") instead of "≈" ("almost equal to")? As is illustrated by catastrophic cancellation, an approximation may not be "almost" correct. But my question is, aren't those relations to the resulting standard deviation exact instead of approximate? 172.69.22.152 04:16, 22 April 2020 (UTC)

Are the results truly correct? Wouldn't the final sum and product standard deviations be √2 10^6 ?

This comic makes me think about the "Garbage In, Garbage Out" rule of programming as well. Probably unrelated, but it just came to mind. Sarah the Pie(yes, the food) (talk) 11:14, 9 May 2021 (UTC)
