Talk:2311: Confidence Interval

Revision as of 10:56, 26 May 2020 by 162.158.158.237 (talk)

What's a millisigma? 162.158.107.209 03:31, 26 May 2020 (UTC)Ven

- Not an official scientific term; most likely it refers to standard deviation. About 68.3% of the values in a normal distribution lie within one standard deviation, or sigma, of the mean. A millisigma would then be 0.0683% of a normal distribution, so that much variation would be bad? Not sure. 172.69.63.203 05:23, 26 May 2020 (UTC)

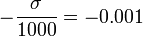

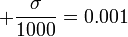

- Actually, if you integrate a normal distribution

from

from  to

to  , you'll get a range of about 0.08% of all values. This would be bad because it would mean that, as big as the confidence interval appears in the picture, the more meaningful 1- or 3-sigma interval (whose size represents the uncertainty of the model) would be larger by a factor of 1250 or 3750, respectively. --Koveras (talk) 08:38, 26 May 2020 (UTC)

, you'll get a range of about 0.08% of all values. This would be bad because it would mean that, as big as the confidence interval appears in the picture, the more meaningful 1- or 3-sigma interval (whose size represents the uncertainty of the model) would be larger by a factor of 1250 or 3750, respectively. --Koveras (talk) 08:38, 26 May 2020 (UTC)

- Actually, if you integrate a normal distribution

- Perhaps you have heard of Six Sigma, a quality method used by General Electric (among others) to keep specifications and processes within tiny tolerances. The six sigmas mean that even absolute (so-called) outliers in your production are within the strict tolerances. With milli-sigmas, it would be extremely rare to get an acceptable result at all. Sebastian --108.162.229.234 10:53, 26 May 2020 (UTC)
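
For anyone who wants to check the coverage figures quoted in this thread, here is a minimal Python sketch. It assumes that a "millisigma" interval means the mean plus or minus 0.001 sigma, which is an interpretation and not something the comic states. The helper name `fraction_within` is made up for illustration. It relies on the standard identity that the fraction of a normal distribution within k sigma of the mean is erf(k / sqrt(2)).

```python
import math


def fraction_within(k: float) -> float:
    """Fraction of a normal distribution within k standard deviations of the mean.

    Hypothetical helper for illustration; uses the identity
    P(|X - mu| < k * sigma) = erf(k / sqrt(2)).
    """
    return math.erf(k / math.sqrt(2))


# Assumption: a millisigma interval is mean +/- 0.001 sigma.
for k in (0.001, 1.0, 3.0):
    print(f"within +/-{k} sigma: {fraction_within(k):.4%}")
```

Under that assumption the three values come out at roughly 0.08%, 68.3% and 99.7%, which matches the 0.08% and 68.3% figures mentioned above.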

Could it be related to the COVID-19 pandemic and all those graphs that try to predict whether it is in decline or not? Tkopec (talk) 08:27, 26 May 2020 (UTC)

- No. But maybe it's related to the recent Mt. St. Helens comic... :p Seriously, not everything has to be related to the hot-button topic of the day.

- Au contraire, mes amis, it is obvious to me that 1: Barrel - Part 1 is about socially isolating away from the virus. (Remember to sign?) 162.158.158.237 10:56, 26 May 2020 (UTC)