Difference between revisions of "Main Page"

Revision as of 21:24, 11 August 2012

Welcome to the explain xkcd wiki! We already have 12 comic explanations!

(But there are still 2913 to go. Come and add yours!)

Latest comic

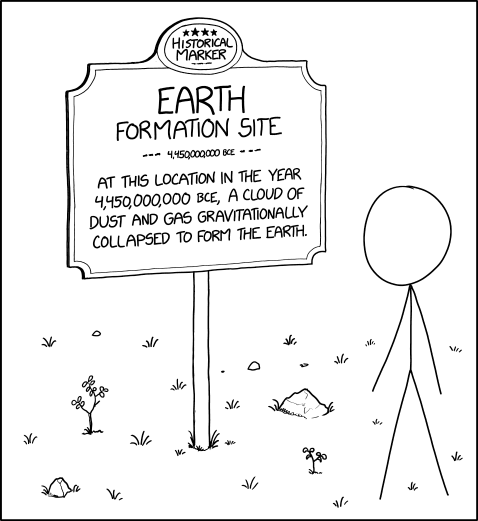

Earth Formation Site

Title text: It's not far from the sign marking the exact latitude and longitude of the Earth's core.

Explanation

This explanation may be incomplete or incorrect: Created by a BOT. Please change this comment when editing this page. Do NOT delete this tag too soon.

This comic depicts Cueball looking at a historical marker, a sign that in this case marks the site where the Earth was created. Such signs are commonly used to mark and explain the sites of historical events. However, they rarely commemorate something like the formation of the planet, and they almost never give dates more than 4 billion years in the past.

Transcript

This transcript is incomplete. Please help edit it! Thanks.

New here?

Feel free to sign up for an account and contribute to the explain xkcd wiki! We need explanations for comics, characters, themes, memes and everything in between. If it is referenced in an xkcd webcomic, it should be here.

- If you're new to wikis like this, take a look at these help pages describing how to navigate the wiki and how to edit pages.

- Discussion about various parts of the wiki is going on at Explain XKCD:Community portal. Share your 2¢!

- List of all comics contains a complete table of all xkcd comics so far and their corresponding explanations. Red links (like this) mark comics whose explanations are still missing. Feel free to help out by creating them!

Rules

Don't be a jerk. Many comics don't have set-in-stone explanations, so feel free to put multiple interpretations on the wiki page for each comic.

If you want to talk about a specific comic, use its discussion page.

Please submit only material that is directly related to xkcd and helps everyone understand it better. Of course, submit only material that can legally be posted (and freely edited). Off-topic or otherwise inappropriate content may be removed or modified at admin discretion, and users who post such content risk being blocked.

If you need assistance from an admin, feel free to leave a message on their personal discussion page. The list of admins is here.

Logo

Explain xkcd logo courtesy of User:Alek2407.