Main Page

Welcome to the explain xkcd wiki!

We have collaboratively explained 5 xkcd comics, and only 2920 (100%) remain. Add yours while there's a chance or extend incomplete descriptions!

Latest comic

Earth Formation Site

Title text: It's not far from the sign marking the exact latitude and longitude of the Earth's core.

Explanation

This explanation may be incomplete or incorrect: Created by A 4,450,002,024 YEAR OLD BALL OF DUST AND GAS - Please change this comment when editing this page. Do NOT delete this tag too soon.

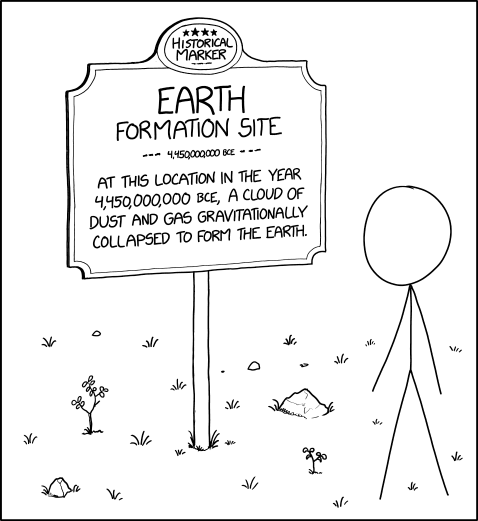

In this comic, Cueball stands in front of a sign that declares the spot to be a historical location: the site of the formation of the Earth. The absurd humor of the comic is threefold:

- the impossibility of knowing exactly where the Earth formed

- the impossibly precise date

- the frequent imprecision of historical markers

First, the Earth formed at (or near) the center of the current Earth, so this sign is technically above the right spot. But since every location on Earth is above the right spot, the location of the sign is not very specific. In this it resembles other historical markers for events, such as a famous battle, that happened over a much larger area.

Even if an omniscient observer wanted to mark the spot in space where the Earth started forming, they would have trouble because of the Sun's roughly 225-million-year orbit around the center of the Milky Way galaxy and the movement of the galaxy itself through space relative to other objects. From this perspective, the Earth's formation did not occur anywhere on Earth.

Secondly, the date on the sign is also ridiculously precise, in keeping with the information usually found on historical markers but absurd in the context of the tens or hundreds of millions of years thought to be required for planet formation. Pinning down a single year would require a specific definition of when the gradually coalescing mass could be considered a planet, as well as the ability to determine when that mass met the definition. The date shown for the formation of the Earth, about 4.45 billion years ago, also differs from the commonly accepted age of 4.54 (±0.05) billion years. The difference lies in the transposition of two digits, which is likely due to an error in the sign rather than a mistake on Randall's part.
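The arithmetic behind that comparison can be checked with a short sketch. The function and constant names below are made up for illustration, and the ages are rounded to millions of years so that everything stays in whole numbers. It shows that the sign's figure misses the accepted age by more than the quoted uncertainty, and that the two figures differ only by a swap of adjacent digits.

```python
# Ages in millions of years (Myr), kept as integers to avoid floating-point noise.
# All names here are hypothetical helpers for illustration only.
SIGN_AGE_MYR = 4450      # age implied by the sign (about 4.45 billion years)
ACCEPTED_AGE_MYR = 4540  # commonly accepted age (4.54 billion years)
UNCERTAINTY_MYR = 50     # quoted uncertainty (plus or minus 0.05 billion years)


def gap_myr(sign: int, accepted: int) -> int:
    """Absolute difference between the two ages, in millions of years."""
    return abs(accepted - sign)


def within_uncertainty(sign: int, accepted: int, uncertainty: int) -> bool:
    """True if the sign's age falls inside the accepted range."""
    return gap_myr(sign, accepted) <= uncertainty


def is_adjacent_transposition(a: str, b: str) -> bool:
    """True if b is a with exactly one pair of adjacent characters swapped."""
    if len(a) != len(b):
        return False
    diffs = [i for i in range(len(a)) if a[i] != b[i]]
    return (
        len(diffs) == 2
        and diffs[1] == diffs[0] + 1
        and a[diffs[0]] == b[diffs[1]]
        and a[diffs[1]] == b[diffs[0]]
    )


if __name__ == "__main__":
    print(gap_myr(SIGN_AGE_MYR, ACCEPTED_AGE_MYR))
    print(within_uncertainty(SIGN_AGE_MYR, ACCEPTED_AGE_MYR, UNCERTAINTY_MYR))
    print(is_adjacent_transposition("4.45", "4.54"))
```

The gap works out to 90 million years, which is larger than the 50-million-year uncertainty, so the sign's date cannot be explained as rounding within the accepted range.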

Thirdly, this comic is also poking fun at the norms and frequent flaws of historical markers. Typically, these signs are placed at precise locations where historical, religious and even mythological events happened (for example, where battles were fought, where people of note were born, lived, accomplished something, or died, or where something supposedly happened). In some cases, multiple locations lay claim to events whose true locations are uncertain.

The title text refers to a sign marking the "exact latitude and longitude of the Earth's core". This is similar to real signs marking specific latitudes, longitudes or other notable locations. But latitude and longitude only describe positions on the surface, and the point directly beneath any pair of coordinates, followed far enough down, is theoretically the Earth's core. This reinforces the idea that no single location can be picked as the exact place where the Earth formed.

Transcript

This transcript is incomplete. Please help edit it! Thanks.

- [Cueball is standing in front of a sign in a field of grass. Rocks and plants are scattered across the ground. The sign reads:]

- HISTORICAL MARKER

- EARTH

- FORMATION SITE

- --- 4,450,000,000 BCE ---

- At this location in the year 4,450,000,000 BCE, a cloud of dust and gas gravitationally collapsed to form the Earth.

New here?

You can read a brief introduction to this wiki at explain xkcd. Feel free to sign up for an account and contribute to the wiki! We need explanations for comics, characters, themes, memes and everything in between. If it is referenced in an xkcd webcomic, it should be here.

- If you're new to wikis like this, take a look at these help pages describing how to navigate the wiki and how to edit pages.

- Discussion about various parts of the wiki is going on at Explain XKCD:Community portal. Share your 2¢!

- List of all comics contains a complete table of all xkcd comics so far and the corresponding explanations. The missing explanations are listed here. Feel free to help out by creating them! Here's how.

Rules

Don't be a jerk. There are a lot of comics that don't have set-in-stone explanations; feel free to put multiple interpretations in the wiki page for each comic.

If you want to talk about a specific comic, use its discussion page.

Please only submit material that is directly related to xkcd and helps everyone better understand it, and of course only submit material that can legally be posted (and freely edited). Off-topic or other inappropriate content is subject to removal or modification at admin discretion, and users who repeatedly post such content will be blocked.

If you need assistance from an admin, post a message to the Admin requests board.