1866: Russell's Teapot

Title text: Unfortunately, NASA regulations state that Bertrand Russell-related payloads can only be launched within launch vehicles which do not launch themselves.

Explanation

Russell's Teapot is a philosophical analogy about the difficulty of proving a negative. It imagines a teapot orbiting the Sun whose existence has never been demonstrated but also cannot be disproven (perhaps somebody put it there secretly?). While an instrument could theoretically be engineered to pick out a teapot-sized object of any luminosity, the teapot would be very easy to confuse with other pieces of space debris, and the space to search is extremely large; the task is thus akin to the proverbial search for a needle in a haystack.

Bertrand Russell devised this analogy "to illustrate that the philosophic burden of proof lies upon a person making unfalsifiable claims, rather than shifting the burden of disproof to others." As such, Russell's teapot is very often used in atheistic arguments.

"He wrote that if he were to assert, without offering proof, that a teapot orbits the Sun somewhere in space between the Earth and Mars, he could not expect anyone to believe him solely because his assertion could not be proven wrong." (Wikipedia)

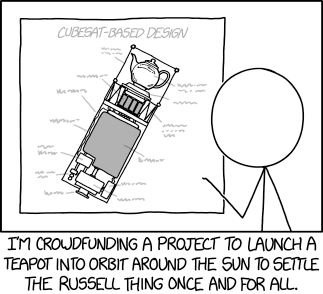

Cueball is trying to settle the teapot argument by actually launching a teapot into space via a crowdfunding campaign. This misses the point of Russell's argument, which is about unfalsifiable claims in rhetoric and not a literal teapot.

"CubeSat-based design" refers to a type of miniaturized satellite built from 10-centimeter cube units (here seemingly three units), which offers a cost-effective means of getting a payload into orbit.

The title text refers to Russell's paradox, also formulated by Bertrand Russell. The paradox exposed a flaw in naïve set theory, which allowed one to consider "the set of all sets that do not contain themselves" (a "set" is the mathematical term for a collection of things, and those things may include sets, even the set itself). The paradox arises when asking whether this set contains itself: if it does, then it cannot; if it doesn't, then it must. The launch regulation has the same shape as the barber paradox: a vehicle that launches exactly those vehicles which do not launch themselves is impossible. If it takes off, it launches itself along with the teapot, and is therefore a vehicle it is not allowed to launch, so it can never be launched (without violating the alleged NASA regulations, at least). That said, Cueball might get around the regulations by using a first stage with an offboard power source at the moment of launch: for example, a laser striking a parabolic mirror and massively heating the air beneath the craft so that it expands, or a compressed-gas cold-launch system like the one used to clear submarine-launched missiles from their tubes before the real rocket motor ignites.
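In symbols, the contradiction can be sketched as follows. The names are labels chosen here for illustration and do not come from the comic: $R$ is the problematic set, $V$ is the hypothetical launch vehicle, and $L(a, b)$ means "$a$ launches $b$".

$$
R = \{\, x \mid x \notin x \,\} \quad\Longrightarrow\quad R \in R \iff R \notin R
$$

$$
\forall x\,\bigl( L(V, x) \iff \lnot L(x, x) \bigr) \quad\Longrightarrow\quad L(V, V) \iff \lnot L(V, V) \quad (\text{taking } x = V)
$$

In both cases, applying the defining rule to the object itself yields a statement that is true exactly when it is false.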

The barber paradox can be stated as follows: "Consider a town in which a man, the barber, shaves precisely those men who do not shave themselves. Does the barber shave himself?" Either answer, yes or no, leads to a contradiction. Sometimes the paradox is incorrectly stated, replacing "precisely those" with "only". Under that scenario, there is no paradox; the barber is merely unkempt.
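The difference between "precisely those" and "only" can be checked by brute force. The following is a minimal sketch, assuming a hypothetical town of three men (the names and the helper functions are invented for illustration): it enumerates every possible "x shaves y" relation and keeps the ones that satisfy each wording of the rule.

```python
from itertools import product

# Hypothetical three-man town; the names are illustrative only.
MEN = ["barber", "adam", "bob"]


def all_relations(men):
    """Yield every possible 'x shaves y' relation as a set of (x, y) pairs."""
    pairs = [(x, y) for x in men for y in men]
    for bits in product([False, True], repeat=len(pairs)):
        yield {pair for pair, bit in zip(pairs, bits) if bit}


def precisely_those(shaves, men, barber="barber"):
    """The barber shaves m if and only if m does not shave himself."""
    return all(((barber, m) in shaves) == ((m, m) not in shaves) for m in men)


def only_those(shaves, men, barber="barber"):
    """The barber shaves m only if m does not shave himself (one-way rule)."""
    return all((m, m) not in shaves for m in men if (barber, m) in shaves)


strict = [s for s in all_relations(MEN) if precisely_those(s, MEN)]
weak = [s for s in all_relations(MEN) if only_those(s, MEN)]

print(len(strict))                                   # 0: no consistent town exists
print(len(weak) > 0)                                 # True: the weaker rule is satisfiable
print(any(("barber", "barber") in s for s in weak))  # False: the barber never shaves himself
```

Under the "precisely those" wording no relation survives, because the rule applied to the barber himself is contradictory. Under the "only" wording many relations survive, and in every one of them the barber does not shave himself.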

There is, however, a way out in this case. Instead of launching itself, the teapot-containing vehicle may be fired from a space gun, catapult, or other launcher, and then boost itself the rest of the way. CubeSats already meet this condition, since they are carried aloft rather than launching themselves, but the rocket that carries them does not.

Randall has talked about CubeSats in later comics as well, specifically in 1992: SafetySat and 2148: Cubesat Launch.

Potential List of Labeled Items

From the top right, clockwise.

| Item # | Possible Label | Possible Description |
|---|---|---|
| 1 | Teapot | Classic teapot, the point of the satellite. |
| 2 | Base | Holds Teapot in Place |
| 3 | Vehicle Equipment Bay | With foldable antenna and stabilizers |
| 4 | Fuel | |
| 5 | Milk / Lemon Juice | add to taste. Either/Or |
| 6 | Combustion Chamber | |
| 7 | Nozzle | |
| 8 | Micro-USB connector | To charge the Battery |
| 9 | Battery | Powers the Heater Unit (q.v.) |
| 10 | | |
| 11 | | |
| 12 | Heater Unit | To keep the tea from freezing |
| 13 | Display Cabinet | Protects the teapot from micrometeorites |

Transcript

- [Cueball is standing in front of a blueprint labeled "CubeSat-Based Design", containing a satellite with a teapot in the top.]

- [Caption below the panel:]

- I'm crowdfunding a project to launch a teapot into orbit around the sun to settle the Russell thing once and for all.

Discussion

In this case, nesting the teapot in a catapult/cannon which is launched by another catapult/cannon might perhaps be sufficient to get past NASA regulations. (Catapults/cannons only launching the payload and not themselves...) --Nialpxe, 2017. (Arguments welcome)

- Though there's still the matter of an equal and opposite force pushing the satellite away from its gravitational bonds of the catapult. Even if the 2nd catapult is no longer associated with the Earth or Earth's gravity, the catapult will continue to be a launcher. That's just changing what it is launching *from*. 172.68.58.125 18:31, 24 July 2017 (UTC)ColinHeico

- But make sure it is a mobile cannon, otherwise it would not qualify as a launch vehicle. 162.158.89.19 11:32, 21 July 2017 (UTC)

- I immediately thought "railgun". And the payload can still be a rocket; once it's not touching the ground it's accelerating, not launching. (Also Russell failed to account for female barbers. Honestly, people!) 108.162.241.4 09:42, 22 July 2017 (UTC)

- One such company did exist, Quicklaunch had the idea of launching via a space gun. https://en.m.wikipedia.org/wiki/Quicklaunch 172.68.141.142 (talk) (please sign your comments with ~~~~)

- He didn't need to account for female barbers (or anybody who isn't a man) because the barber in the paradox shaves precisely those men who don't shave themselves. He only shaves men, and all men in the town are only shaved by him or themselves. Everyone else is a completely different story, so they can be shaved by whoever they want (except the barber, who only shaves men). 108.162.241.88 00:14, 23 July 2017 (UTC)

- Only if you assume that females who are barbers don't shave their legs, armpits, or their various lady parts. This only further confuses the paradox. -- Mjm87 (talk) (please sign your comments with ~~~~)

- For much of Bertrand Russell's life, they didn't. http://mentalfloss.com/article/22511/when-did-women-start-shaving-their-pits 108.162.241.4 09:42, 22 July 2017 (UTC)

- Why are we even bringing up the argument of female barbers when the description of the paradox, at least as phrased within this article, specifically states that the barber is a man? —CsBlastoise (talk) 18:30, 4 December 2017 (UTC)

- Never mind, I just checked the page history, and it appears there was no description of the barber paradox in this article at the time the majority of the preceding comments were written. —CsBlastoise (talk) 18:56, 4 December 2017 (UTC)

- You wouldn't even need a cannon/catapult. If you put the satellite on a small rocket, and put that on a much larger rocket, you can have the big one launch itself, the smaller one, and the satellite. The regulation only says the satellite must be in a non-self-launching launch vehicle. It doesn't say it can't *also* be in a self-launching launch vehicle. -- 108.162.246.113 20:06, 24 July 2017 (UTC)

When I first saw this comic I immediately thought of the Utah Teapot; it's a model used in computer graphics because it's simple and has both convex and concave surfaces. Both teapots, I would assume, (I've only just heard of Russell's Teapot so I could be wrong) are well known to different parts of the nerd community? 162.158.255.22 (talk) (please sign your comments with ~~~~)

Hopefully it will support HTCPCP-TEA. 108.162.241.34 17:48, 21 July 2017 (UTC)

i think people just really like teapot examples 108.162.246.23 (talk) (please sign your comments with ~~~~)

- The major problem here is that CubeSats are currently only launched into Low Earth Orbit (LEO) and are expected to re-enter the atmosphere within days to weeks. Russell's teapot is (allegedly) in orbit between Earth and Mars and Cueball's device is not likely to have enough delta-v to leave Earth orbit. SteveBaker (talk) 18:18, 21 July 2017 (UTC)

"A teapot orbits the Sun somewhere in space between the Earth and Mars" This implies that the teapot is physically located between Mars and Earth at all times. Which if true would be a highly irregular orbit requiring constant velocity changes, which is an impossible feat to achieve with current teapot technology. -- Mjm87 (talk) (please sign your comments with ~~~~)

- Nonsense. It would be a highly regular orbit and many asteroids are already there, despite the most of them are between Mars and Jupiter (Asteroid-Belt):--Dgbrt (talk) 21:22, 21 July 2017 (UTC)

- Since we're nitpicking. Having velocity changes does not preclude being in orbit: objects in orbit are always accelerating. Having a constant velocity change does preclude being in orbit, but it also precludes remaining between Earth and Mars, since it would result in eventually leaving the solar system.--172.68.54.112 19:45, 24 July 2017 (UTC)

- Still nonsense. The mean velocity of an (elliptic) orbit is constant, only the direction is changing. And there are many asteroids in stable orbits between Earth and Mars. Leaving the solar system would require many energy at those orbits, all human build probes (Pioneer, Voyager and New Horizons) had to use gravity assist at Jupiter to reach this target.--Dgbrt (talk) 14:12, 26 July 2017 (UTC)

- It sounds to me like you're missing the interpretation Mjm87 is trying to share. Yes, the way Russell meant it was that Russell's Teapot is between Mars and Earth in the same way that Earth is between Mars and the Sun, that this teapot is in a larger orbit than Earth and smaller than Mars. Mjm87's interpretation adds the idea that not only is it in such an orbit, but also in a direct line in between, always. In other words, that someone looking at Mars through a powerful telescope would always be able to see Russell's Teapot "in the way", like a little Mars eclipse. :) Staying in that spot would indeed take strange acceleration. I'm no astrophysicist or anything, but I imagine if I think of our galaxy as a clock face, with Earth always at the 12 o'clock position, that Mars would at some point be at 3 o'clock, at another time be at 9 o'clock, etc. (of course this is a 2D intepretation of a 3D situation, but I hope you get my point. Actually the third dimension would make this orbit even stranger) NiceGuy1 (talk) 05:16, 28 July 2017 (UTC)

I can see both of your points. As Mjm87 says, "between the Earth and Mars", taken literally, would mean "on a line between the two planets", which would be a very unusual orbit. And, I agree, it would be impossible without constant velocity changes, so wouldn't be an "orbit" in the usual sense. On the other hand, I took Russell's words the way Dgbrt seems to have, as meaning "between the orbits of Earth and Mars", as this is the way most astronomers would interpret it. I don't know that there are "many" asteroids that remain between Earth and Mars, but there are quite a few crossing the space, and at least a few with average distances in that range. - N Kalanaga 162.158.74.159 (talk) (please sign your comments with ~~~~)

- There is also quantifier scope ambiguity there. I believe that there is a large constellation of teapot statites, and at any given moment at least one of them is directly between Earth and Mars. --172.68.54.58 06:29, 22 July 2017 (UTC)

Since Russell was going for absurdity, I favour the more absurd interpretation namely Mjm87's. Capncanuck (talk) 08:21, 22 July 2017 (UTC)

- Taking "on a line between the two planets" literally would simply reduce to "inside the orbit of Mars". The Earth moves faster than Mars and right now the Sun is exactly between them on that line. NASA, ESA, and ISRO can not communicate with their orbiters and rovers until the beginning of August (see Solar conjunction). So the meaning "between the orbits of Earth and Mars" is still much more plausible.--Dgbrt (talk) 16:11, 22 July 2017 (UTC)

- What if it's in the Earth-Mars L1 point? Then it's always on a line between the two planets. Promethean (talk) 06:02, 26 July 2017 (UTC)

- Lagrangian points exist for Earth-Sun, Mars-Sun, or Moon-Earth (small object orbits a larger one). There is nothing similar for Earth-Mars. Earth moves faster around the sun and the closest approach happens every 26 months at a distance not less than 55 Mio. km. 13 months later the maximum distance is approx. 400 Mio. km and the sun is in the middle as it happens right now!--Dgbrt (talk) 13:46, 26 July 2017 (UTC)

Don't worry we have been working on it. Launching the project in a few months. https://www.instagram.com/p/BSmdiMSFBSb/?taken-by=hate_plow https://www.instagram.com/p/BSwW4MIlE0b/?taken-by=hate_plow Zackdougherty (talk) 03:10, 22 July 2017 (UTC)

- Actually, it couldn't be on a direct line between Earth and Mars because then it would be tremendously easier to find (or disprove)! If the teapot can be anywhere between the orbits, then that is a vastly larger space to look for a teapot and therefore more difficult to disprove. Similarly, it is unlikely there are a whole constellation because then it would be more likely to find at least one. 172.68.34.94 03:19, 25 July 2017 (UTC)

Could some people (smarter than myself) make an attempt at labeling the items on the cube sat that Randall left at squiggles? Maybe starting from the top, clockwise? I'll start a table, but I'm sure someone will need to fix it. DanB (talk) 03:24, 25 July 2017 (UTC)

- Personally, I suspect such a diagram wouldn't have the top labelled as "Teapot", but as "Payload". :) To me it looks even longer, so perhaps "Top Secret Specialty Payload" or something? NiceGuy1 (talk) 05:05, 1 August 2017 (UTC)

The title text refers to Russell's paradox, and it is funny that Russell came to it thinking about teaspoons : "The class of teaspoons, for example, is not another teaspoon, but the class of things that are not teaspoons, is one of the things that are not teaspoons." See https://math.stackexchange.com/questions/1046863/how-can-a-set-contain-itself for the exact source. 198.41.242.47 08:29, 28 July 2017 (UTC)

Amusingly Russell's original words, at least as far as I've seen them quoted, literally described the teapot as being a planet. They stated something like "what if I said that orbiting the sun between Earth and Mars was a small planet the shape and size of a teapot...". The "thought experiment" dies a pretty quick death when you consider the current IAU definition of a planet, that it must be large enough to pull itself into a sphere from self-gravity (no marks for the teapot) and it needs to be gravitationally dominant in its orbital region (no chance for something so low in mass), although that latter point tends to provoke the Pluto debate. Either way, by the strict definition, there isn't a teapot-shaped "planet". Also if you don't call the teapot a planet, but do stick to Russell's words about an elliptical orbit you can probably calculate that something so small waving about between the orbits of Earth and Mars will end up being ejected due to a gravitational tug or resonance somewhere, probably from Jupiter (given Jupiter's mass it perturbs just about anything even when things are inside the orbit of Mars). Once again profound philosophy gets an unfortunate surprise from orbital dynamics. 162.158.154.91 23:39, 2 August 2017 (UTC)

- Nope. Russell said: "...a teapot orbits the Sun somewhere in space between the Earth and Mars." He didn't say the teapot is a planet. And in 1952 the IAU definition from 2006 didn't exist and Pluto was still a planet. --Dgbrt (talk) 18:55, 3 August 2017 (UTC)

How come that no one mentioned **418**? 172.68.226.16 18:43, 7 August 2017 (UTC)

Cheating solution to the barber paradox: due to a medical condition, the barber does not grow hair on any part of his body.

Solution to the NASA paradox: launch on a non-NASA vehicle, say SpaceX or Blue Origin. If you interpret that as NASA forbidding anyone to launch on vehicles that don't launch themselves, have ESA launch it. If that isn't enough, have Virgin Orbital launch it from their system, which is "launched" on a jet aircraft and then uses rockets to complete orbital insertion. Nitpicking (talk) 14:50, 29 December 2022 (UTC)