2173: Trained a Neural Net

Title text: It also works for anything you teach someone else to do. "Oh yeah, I trained a pair of neural nets, Emily and Kevin, to respond to support tickets."

Explanation

This is another one of Randall's Tips, this time an Engineering Tip.

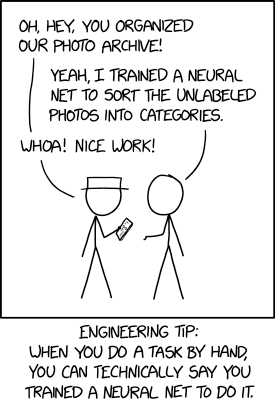

An artificial neural network, also known as a neural net, is a computing system loosely inspired by the human brain, which "learns" by considering many examples and developing patterns from them. For example, neural nets are used in image recognition: by analyzing thousands or millions of examples, the system learns to identify particular objects. Neural networks typically start with no prior knowledge of the task and are "trained" by being fed examples of the thing they are meant to analyze.
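
As a rough illustration of "learning from examples", here is a minimal sketch (not from the comic, and far smaller than anything used for image recognition) of the simplest possible neural net: a single artificial neuron, or perceptron, trained to compute logical AND from four labeled examples. The function names `train_perceptron` and `predict`, the AND task, and the integer learning rate are illustrative assumptions; real image classifiers stack many such units and are usually trained with gradient-based methods rather than this classic perceptron update rule.

```python
def train_perceptron(examples, epochs=20, lr=1):
    """Train a single neuron on (inputs, label) pairs, where label is 0 or 1."""
    n_inputs = len(examples[0][0])
    weights = [0] * n_inputs  # starts with "no prior knowledge"
    bias = 0
    for _ in range(epochs):
        for inputs, label in examples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            prediction = 1 if activation > 0 else 0
            error = label - prediction  # 0 if correct, +1 or -1 if wrong
            # Nudge each weight toward the correct answer only when the guess was wrong
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, inputs):
    """Fire (return 1) if the weighted sum of the inputs exceeds zero."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) + bias > 0 else 0


# The "training data": every input pair for AND, with the correct answer attached
AND_EXAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
w, b = train_perceptron(AND_EXAMPLES)
print([predict(w, b, x) for x, _ in AND_EXAMPLES])  # prints [0, 0, 0, 1]
```

Nobody writes the rule for AND into the code; the neuron arrives at working weights purely by being corrected on examples, which is the sense in which a neural net is "trained".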

Here, Cueball is telling White Hat how he trained a neural net to sort photos into categories. The joke lies in the engineering tip from the caption: since a human brain is already a neural network, albeit a biological rather than an artificial one, teaching yourself (or others) to do a task is technically training a neural network to do it. So instead of designing and training an artificial neural net for the task, all Cueball did was sort the photos into categories by hand (although he could then use those sorted images to train an artificial neural network).

It is not advisable to say this in real life, because you might then be expected to use your already-trained neural net to do a similar task (or redo the same task) much faster, which would expose the facade. However, presenting work done by humans as work done by machines has happened in real life, perhaps starting with the Mechanical Turk, a 1770 chess-playing "automaton" secretly operated by a hidden human, and continuing to the present day with various AI-themed startups. For example, Engineer.ai described itself as using "natural language processing and decision trees" to automate app development, but was actually employing humans to do the work.

The title text continues the joke: instead of designing and training two artificial neural nets named "Emily" and "Kevin", he has simply trained two people with those names to respond to support tickets. Again, doing this in real life is not advisable, as most people are offended when programmers refer to them as deterministic automata with no free will.[citation needed]

Neural networks have been trained to perform other tasks that are routine for humans but were formerly difficult for computers, such as driving cars, playing games like chess, Go, and Jeopardy!, and communication skills such as extracting phonological information from speech (see Figure 1 here). In 1897: Self Driving, Randall suggested that crowdsourced applications like reCAPTCHA, which have been used to train neural nets to recognize objects relevant to safe driving in photographs, might also be used for Wizard of Oz experiments, in which a hidden human performs the task that a system appears to do automatically. One such Wizard of Oz experiment, using phonological training as a form of peer learning, has been explored, and related work is being done on automating vocational training.

The extent to which computer neural nets are analogous to human neurobiology is a topic which fascinates the scientist and layperson alike. While there is no fully universal consensus on the matter, at least one apparently longstanding theoretical paradigm has received attention recently.

Transcript

- [White Hat is looking at a smartphone in his hand, while he talks to Cueball, who lifts a hand palm up towards White Hat.]

- White Hat: Oh, hey, you organized our photo archive!

- Cueball: Yeah, I trained a neural net to sort the unlabeled photos into categories.

- White Hat: Whoa! Nice work!

- [Caption below the panel:]

- Engineering Tip: When you do a task by hand, you can technically say you trained a neural net to do it.

Trivia

- Cueball is depicted abusing the training of a chatbot in 1696: AI Research.

Discussion

Of course it's cheating, as humans' neural nets come pre-trained. I mean, unless you trained an infant to do it, and even then, some things in image recognition are hardwired. In any contest between modern software and an infant in face recognition or "is that face happy" recognition, I'm betting on the infant. -- Hkmaly (talk) 21:03, 8 July 2019 (UTC)

- Face recognition might be innate, but higher-level tasks are not. You're not born knowing how to ride a bicycle or do algebra (there may be some simple counting circuits in the brain); your neural network has to be trained so you can do these. Barmar (talk) 22:04, 8 July 2019 (UTC)

Ahh -- a short and sweet comic and explanation! I'd propose not bloating the explanation too much; the joke has been explained perfectly fine already. 172.68.51.16 22:16, 8 July 2019 (UTC)

Perhaps we should just all adhere to Randall's own advice in 1475:Technically:

- 'My life improved when I realized I could just ignore any sentence that started with "technically."'

162.158.154.115 11:36, 9 July 2019 (UTC)

- But this one doesn't start that way. 141.101.99.77 14:41, 9 July 2019 (UTC)

- Technically correct is only technically the best kind of correct during the all-but two week window when astrology doesn't work. 172.68.141.82 18:13, 9 July 2019 (UTC)

Yay! We trained a neural net to explain XKCD. Elektrizikekswerk (talk) 13:23, 9 July 2019 (UTC)

I'm not convinced that the paragraph on the neural net for answering questions about Wikipedia content is helpful at explaining the comic, but I am convinced that including 6 separate links within that short paragraph is entirely disruptive to that goal. Either the quantity of links should be severely curtailed or the paragraph needs to be removed from the explanation! Ianrbibtitlht (talk) 19:38, 9 July 2019 (UTC)

- I'm a little concerned that you called it spam, given Randall's affinity for Wikipedia, and it being the best example. Can we workshop it here? I am happy to replace the many links to one at an intermediate page, e.g.[1] 172.68.189.91 23:40, 9 July 2019 (UTC)

- I didn't call it spam - the editor who removed it used that term. I just felt the nearly continuous links were a little excessive and made it difficult to read. It might be appropriate in an added "Trivia" section at the end of the explanation, with just a link or two. Ianrbibtitlht (talk) 13:10, 10 July 2019 (UTC)

- I understand Randall's affinity for Wikipedia, but I don't believe that this neural net paragraph is helpful to the comic explanation. The Wikipedia neural net is not mentioned in the comic, and there is no indication that Randall was thinking about the Wikipedia neural net when he was creating the comic. I will move this to a Trivia section. 162.158.58.169 17:43, 10 July 2019 (UTC)

This explanation uses two distinct definitions of "neural net". The first is the computer science algorithm called a neural net, and the second is the network of neurons that makes up the human brain. We do not know how the human brain works -- artificial neural nets may or may not be a good simulation. However, there is a long history of saying that the brain works like the most complex piece of technology of the day (railroad network, switchboard, computer). So far all of these explanations have been largely wrong (but useful to varying degrees). I suppose we'll get it right eventually, but there is no certainty we are correct today. 162.158.75.52 02:59, 11 July 2019 (UTC)

- Indeed it does. I added material to address that aspect. 172.68.133.222 09:46, 11 July 2019 (UTC)

Uh, I thought the joke is that to train a real neural net you need to feed it accurate information, so in the end you do the work yourself, and you may need to "fine-tune" it by continuing to do the work of the AI. That is, if you do it yourself and do not have a large enough sample to train the net.

- There are both supervised and unsupervised forms of learning. 172.68.189.67 00:00, 26 July 2019 (UTC)