2318: Dynamic Entropy

Title text: Despite years of effort by my physics professors to normalize it, deep down I remain convinced that 'dynamical' is not really a word.

Explanation

This is another of Randall's Tips, this time a Science Tip. It appeared less than three weeks after the previous Science Tip, 2311: Confidence Interval, which was itself the first time that a non-Protip tip type had been reused. It is also the first time a tip type other than Protip has been used for two "tips comics" in a row.

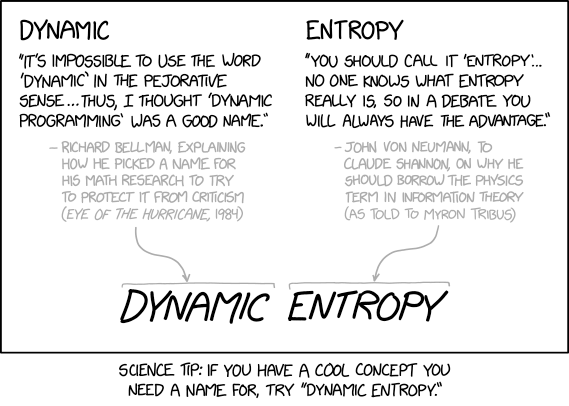

This Science Tip suggests that if you have a cool new concept, you should call it dynamic entropy.

Dynamic programming is a mathematical optimization method and computer programming method developed by Richard Bellman in the 1950s. The History section of the Wikipedia article reproduces the full paragraph from Bellman's autobiography that is the source of the quote in the comic. Bellman describes how he was doing mathematical research funded by the military at a time when the Secretary of Defense had a pathological fear of the word "research" and, by extension, of "mathematical". Bellman borrowed the word "dynamic" from physics because it was both accurate for his work and a word that has positive connotations in plain English and is never used in a pejorative sense (expressing contempt or disapproval). The word "dynamic" comes from the Greek dynamikos, "powerful", which is itself a positive meaning. In physics it has been applied to topics related to motion and forces, and in ordinary English it and its relatives (dynamo, dynamite, and the adjective "dynamic") refer to things that exert power, force, growth, and change. Those things aren't always good, but when they are bad we use other words instead (e.g. cancer undergoes metastasis, not "dynamism").

Entropy is a term from physics, specifically statistical mechanics, describing a property of a thermodynamic system. When Claude Shannon developed a mathematical framework for studying signal processing and communications systems, which became known as information theory, he struggled to find a suitable name for one concept in his theory that quantified the amount of noise or uncertainty in a signal. John von Neumann noticed the similarity of Shannon's equations to some in thermodynamics and suggested, "You should call it entropy, for two reasons. In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, no one really knows what entropy really is, so in a debate you will always have the advantage." (see History of information theory). The following is an excerpt from the explanation of 1862: Particle Properties:

The term "entropy", which began as a thermodynamic measure, has since been adopted by analogy into multiple seemingly unrelated domains including, for example, information theory. The table allows that the term "entropy" must mean something in the context of particle physics, but isn't certain whether it's the classical, Gibbs' modern statistical mechanics, Von Neumann's quantum entropy, or some other meaning.

In classical thermodynamics, entropy is a macroscopic property describing the disorder or randomness of a system with many particles. However, in statistical mechanics and quantum mechanics, the concept of entropy can also be applied to single particles under certain conditions. If the particle's position is not precisely known and can be described by a probability distribution, this contributes to entropy. Similarly, if the particle's momentum is uncertain and described probabilistically, this also contributes to entropy. A single quantum particle in a pure state (e.g., an electron in a specific atomic orbital) has zero entropy. This is because there is no uncertainty about the state of the system. If the single particle's state is described by a density matrix representing a mixed state (a probabilistic mixture of several possible states), the Von Neumann entropy can quantify the degree of uncertainty or mixedness of the state.
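For reference, the standard textbook definitions behind the statistical and quantum entropies described above can be written as follows. The notation is introduced here for illustration and is not taken from the comic: p_i is the probability of microstate i, k_B is Boltzmann's constant, ρ is the density matrix, and λ_i are its eigenvalues (with the von Neumann entropy given in units where k_B = 1).

```latex
S_{\text{Gibbs}} = -k_B \sum_i p_i \ln p_i,
\qquad
S_{\text{vN}} = -\operatorname{Tr}(\rho \ln \rho) = -\sum_i \lambda_i \ln \lambda_i
```

For a pure state, one eigenvalue is 1 and the rest are 0, so every term vanishes (taking 0 ln 0 = 0) and the von Neumann entropy is zero; any genuine mixture has at least two nonzero eigenvalues and therefore a positive entropy.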

Imagine two identical balloons filled with the same gas, each heated from two opposite sides by identical heat sources so that both develop the same symmetric temperature gradient. Because the temperature distributions are the same, the Gibbs statistical thermodynamic entropy S of the gas molecules is the same in each balloon. In contrast, if one balloon is heated by a low-power heat source and the other by an otherwise identical high-power source, the balloon next to the high-power source will have a steeper temperature gradient, increasing the number of accessible microstates, so its Gibbs entropy is larger: S_low < S_high.

Now consider electrons in two atoms that are each excited into a mixed state by absorbing identical photons. If the two mixed states assign the same probabilities to the different energy levels, their von Neumann quantum entropies are equal. Conversely, if one atom's electron is excited to a pure state and the other's to a mixed state by photons of different energies, the mixed state has the higher entropy because of its greater uncertainty: S_pure = 0, while 0 < S_mixed ≤ ln(2) for a mixture of two states.
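The pure-versus-mixed comparison can be checked numerically. The following is a minimal sketch, assuming a two-level system described by a real symmetric 2x2 density matrix; the function names `qubit_eigenvalues` and `von_neumann_entropy` and the three example matrices are hypothetical and chosen only for illustration.

```python
import math


def qubit_eigenvalues(rho):
    """Eigenvalues of a 2x2 real symmetric density matrix [[a, b], [b, d]]."""
    (a, b), (c, d) = rho
    trace = a + d
    det = a * d - b * c
    # Clamp tiny negative values caused by floating-point rounding.
    disc = math.sqrt(max(trace * trace - 4.0 * det, 0.0))
    return [(trace + disc) / 2.0, (trace - disc) / 2.0]


def von_neumann_entropy(rho):
    """S = -sum(p * ln p) over the eigenvalues p, in nats (0 * ln 0 counts as 0)."""
    return -sum(p * math.log(p) for p in qubit_eigenvalues(rho) if p > 1e-12)


# Illustrative states (assumptions, not taken from the comic):
pure = [[0.5, 0.5], [0.5, 0.5]]          # equal superposition, still a pure state
mixed = [[0.5, 0.0], [0.0, 0.5]]         # 50/50 mixture of two levels
partly_mixed = [[0.9, 0.0], [0.0, 0.1]]  # 90/10 mixture of two levels

for name, rho in [("pure", pure), ("mixed", mixed), ("partly mixed", partly_mixed)]:
    print(f"{name}: S = {abs(von_neumann_entropy(rho)):.4f} nats")
```

The pure state has zero entropy even though a measurement of its energy level would be uncertain, because the entropy depends on the eigenvalues of the density matrix rather than on its diagonal entries. The 50/50 mixture reaches the two-level maximum of ln(2), and the 90/10 mixture falls in between.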

The naming of dynamic programming and of entropy in information theory are both examples of scientists choosing a name for reasons that seem, at least in part, decidedly non-scientific. In the first case, the word was chosen because it has only positive connotations in plain English. In the second, it was chosen because there is so much confusion over what the word means that Shannon was free to adopt it in a new context. Randall claims this makes the two words ideal to combine as the name for a new concept: the combination will mean whatever its creator wants it to mean (and can even change mid-debate), and it will never sound bad the way that, for example, "cold fusion" has come to.

Although the caption implies that "dynamic entropy" is available as a new name, the term has already been used in physics[1], probability[2], and computer science[3], and the variant "dynamical entropy" has been used in physics[4][5] and bioscience[6].

In the title text, Randall says that even though his physics professors kept using the word "dynamical", trying to "normalize it" through repeated usage, he remains convinced that it is not really a word. Presumably he dislikes that it stacks two adjective-forming suffixes, -ic and -al, as if "dynamic" weren't already positive enough. The Free Dictionary discusses how the -ic and -ical suffixes are confused in many common words and explains their different uses.

In physics, "dynamical" is generally used in the phrase "dynamical system", or as an adjective for a concept as applied to dynamical systems, such as "dynamical entropy"[7].

Transcript

- [A single panel containing text, a few lines, and arrows. There are two columns, each with a heading. Beneath each heading is a quote written on four lines. Below each quote, in gray, indented text starting with a hyphen, are five lines identifying who the quote belongs to and where it was stated (in brackets at the end). From the bottom of each gray paragraph, a gray curved arrow points down to a gray line. Below each of these two lines is one large word in black text.]

- Dynamic

- "It's impossible to use the word 'dynamic' in the pejorative sense... Thus, I thought 'Dynamic Programming' was a good name."

- - Richard Bellman, explaining how he picked a name for his math research to try to protect it from criticism (Eye of the Hurricane, 1984)

- Entropy

- "You should call it 'Entropy'... No one knows what entropy really is, so in a debate you will always have the advantage."

- - John von Neumann, to Claude Shannon, on why he should borrow the physics term in information theory (as told to Myron Tribus)

- Dynamic Entropy

- [Caption below the panel:]

- Science Tip: If you have a cool concept you need a name for, try "Dynamic Entropy."

Trivia

Many of Buckminster Fuller's designs and works were associated with the word "dymaxion", a combination of the words "dynamic", "maximum", and "tension", all words that Fuller himself used a lot in talking about his work, and which are words that simultaneously have use in science and positive connotations in lay English.

References

- [1] Allegrini, P., Douglas, J. F., & Glotzer, S. C. (1999). Dynamic entropy as a measure of caging and persistent particle motion in supercooled liquids. Physical Review E, 60(5), 5714, doi: 10.1103/physreve.60.5714.

- [2] Asadi, M., Ebrahimi, N., Hamedani, G., & Soofi, E. (2004). Maximum Dynamic Entropy Models. Journal of Applied Probability, 41(2), 379-390. Retrieved June 11, 2020, from www.jstor.org/stable/3216023

- [3] S. Satpathy et al., "An All-Digital Unified Static/Dynamic Entropy Generator Featuring Self-Calibrating Hierarchical Von Neumann Extraction for Secure Privacy-Preserving Mutual Authentication in IoT Mote Platforms," 2018 IEEE Symposium on VLSI Circuits, Honolulu, HI, 2018, pp. 169-170, doi: 10.1109/VLSIC.2018.8502369.

- [4] Green, J. R., Costa, A. B., Grzybowski, B. A., & Szleifer, I. (2013). Relationship between dynamical entropy and energy dissipation far from thermodynamic equilibrium. Proceedings of the National Academy of Sciences, 110(41), 16339-16343.

- [5] Słomczyński, W., & Szczepanek, A. (2017). Quantum dynamical entropy, chaotic unitaries and complex Hadamard matrices. IEEE Transactions on Information Theory, 63(12), 7821-7831, doi: 10.1109/TIT.2017.2751507.

- [6] Chakrabarti, C. G., & Ghosh, K. (2013). Dynamical entropy via entropy of non-random matrices: Application to stability and complexity in modelling ecosystems. Mathematical biosciences, 245(2), 278-281, doi: 10.1016/j.mbs.2013.07.016.

- [7] Atmanspacher, H. (1997) "Dynamical entropy in dynamical systems," in Time, temporality, now (pp. 327-346). Springer, Berlin, Heidelberg, doi: 10.1007/978-3-642-60707-3_22

Discussion

Can confirm, have never lost an argument. Dynamic Entropy (talk) 00:45, 11 June 2020 (UTC)

- You came here for an argument? No, this here is abuse. Argument's next door. Kev (talk) 10:26, 13 June 2020 (UTC)

- Allegrini, P., Douglas, J. F., & Glotzer, S. C. (1999). Dynamic entropy as a measure of caging and persistent particle motion in supercooled liquids. Physical Review E, 60(5), 5714, doi: 10.1103/physreve.60.5714.

- Asadi, M., Ebrahimi, N., Hamedani, G., & Soofi, E. (2004). Maximum Dynamic Entropy Models. Journal of Applied Probability, 41(2), 379-390. Retrieved June 11, 2020, from www.jstor.org/stable/3216023

- Green, J. R., Costa, A. B., Grzybowski, B. A., & Szleifer, I. (2013). Relationship between dynamical entropy and energy dissipation far from thermodynamic equilibrium. Proceedings of the National Academy of Sciences, 110(41), 16339-16343.

- S. Satpathy et al., "An All-Digital Unified Static/Dynamic Entropy Generator Featuring Self-Calibrating Hierarchical Von Neumann Extraction for Secure Privacy-Preserving Mutual Authentication in IoT Mote Platforms," 2018 IEEE Symposium on VLSI Circuits, Honolulu, HI, 2018, pp. 169-170, doi: 10.1109/VLSIC.2018.8502369.

- Bugstomper (talk) 01:28, 11 June 2020 (UTC)

- Can someone with knowledge of the reference system in a wiki make the reference appear above the discussion, maybe in a section named References?--Kynde (talk) 07:06, 11 June 2020 (UTC)

- Done Bugstomper (talk) 09:13, 11 June 2020 (UTC)

Well bugger me (METAPHOR! METAPHOR!) but my current Master thesis in Computer Science could use that term without much shoehorning. (tl;dr: Binary search trees that adapt, =dynamic, can serve a query series faster than static, and the gain depends on the structure of the query series, =entropy. I prefer the good old "instance optimality", though...) 162.158.159.122 08:58, 11 June 2020 (UTC)

This seems to tie in with the recent comic 2315: Eventual Consistency, which is also about entropy (in a thermodynamic(al) sense), but I guess that like the rest of the world I don't know what entropy really is, because if entropy is a measure of how "surprising" a variable is, why is everything being flat and spread out evenly called a state of maximum entropy? Everything being the same doesn't sound very surprising to me... --IByte (talk) 09:08, 11 June 2020 (UTC)

- Because entropy is the inverse of how "surprising" or organized or full of information a system is. Bugstomper (talk) 09:27, 11 June 2020 (UTC)

- It's not about "surprising" but about an even spread of probability, so for matter a complex molecule has less entropy than a smaller molecule because the atoms are held in place, and if the quarks in the atoms aren't even held together in subatomic particles then that is ultimate entropy. For information having more possible choices for the message or password spreads the probability of any one occurrence around more possibilities. If it is narrowly defined it has low entropy because the probability is concentrated in a few items.162.158.75.104 13:53, 11 June 2020 (UTC)

I can think of a number of cases where "dynamic" would be a bad thing, but not necessarily pejorative. The structure of a building had better not be dynamic (think "sudden energetic disassembly"), and when my (salaried, should be steady) paycheck becomes dynamic, I have to talk to HR. Can someone come up with a pejorative? 162.158.78.212 11:16, 11 June 2020 (UTC)

- 2050 retro slang: Dynamic, variant of Dienamic, portmanteau of Die and (Viet) Nam + adjectival suffix // Dienam (n): a long, brutal slog with an unsatisfying ending, possibly with unintended consequences arising some time after the conclusion (cv Agent Orange) "Just like the soldiers a century ago, they knew the project was a dienam -- resources would be wasted, careers ended, and goals unmet -- but they couldn't convince the executives to abandon the project." // Some[thing] that is a failure, many years in the making, from which no success can be extricated. "[The development team] was working on a dynamic project for the studio when [the studio] finally was forced to declare bankruptcy and shutter due to literally years of overcommitting and underdelivering." 162.158.75.104 (talk) (please sign your comments with ~~~~)

As there exists such a thing as resilience by explicitness, when it comes to type system discussions in computer programming language design the term 'dynamic' can be pretty condemning. 141.101.76.204 (talk) (please sign your comments with ~~~~)

(Looks like people aren't ~~~~ing. My contribution starts here.) I can just see a reporter, at the scene of some breaking news, saying "It's very much a dynamic situation here", while dodging various rioter/police missiles, hurricane debris, moving away from a wildfire front or in the middle of a rescue situation in post-earthquake aftermath yet still amidst aftershocks. 141.101.107.164 05:28, 12 June 2020 (UTC)

The 'Use “-ic” with nouns ending in “-ics” (usually)' section of that Free Dictionary article is relevant here, since dynamics is a thing. 172.69.62.148 15:28, 12 June 2020 (UTC)

Dynamic gravity, dynamic oxygen level, dynamic surgical competency, dynamic astronomical unit ... none of these are desirable. These Are Not The Comments You Are Looking For (talk) 20:18, 15 June 2020 (UTC)